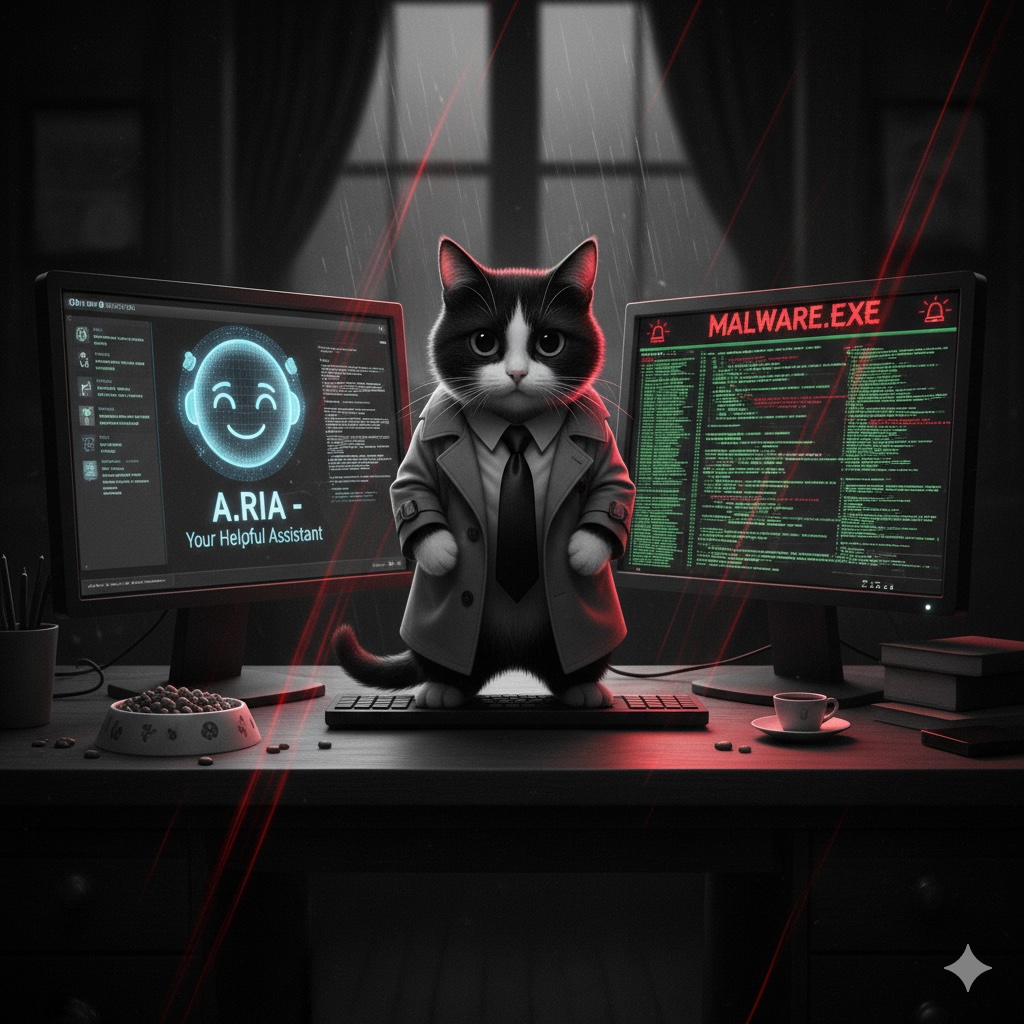

GenAI and “Vibe Coding”: How to secure the new “Shadow IT”

GenAI, through coding agents and vibe coding tools, makes it far easier for business users to build End-User Development (EUD) applications. Shadow IT is therefore likely to grow again, and shadow GenAI with it. This proliferation creates a new application attack surface that security teams are still struggling to control.

To help structure thinking about these new threats, the OWASP community has published the Top 10 for Large Language Model Applications, a useful framework for mapping them. In this context, how can we ensure that these models, agents, and RAG pipelines, often developed outside traditional IT channels, are effectively protected? The question is complex and calls for a multi-layered approach.

The collaborative approach

Just as with traditional EUDs, forbidding and threatening rarely yields positive results. There will always be an intern who doesn’t know what they are doing, a business line with a vital need for its Access application, or some other exception that exposes a significant risk. Another approach is possible: encouraging this type of development, but within a controlled framework. In concrete terms, this means offering these business lines support with vulnerability detection, Threat Modeling (based on the OWASP LLM framework), or infrastructure. In return, these applications become easier to discover, which improves our ability to act.

In my opinion, simply consuming an LLM internally is of limited interest to a business line. What brings real value, and will therefore drive a lot of development, is connecting that LLM to corporate documents and to internal tools such as a CRM or an ITSM platform. This is what AI agents offer: the ability to connect to tools and take action.

Identity-based control

Connecting to internal tools requires an identity, which makes identity an ideal checkpoint for catching projects that bypassed the collaborative approach. Access to internal tools is generally governed by formal processes, so any request to create a non-human account (a service account) that consumes these tools should systematically trigger a review of the AI context.
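As a rough illustration of that trigger, the sketch below triages a service account request. Everything in it is an assumption made for the example: the `ServiceAccountRequest` fields, the `AI_HINTS` keywords, and the two outcome labels are hypothetical and would need to match your own identity governance process.

```python
import re
from dataclasses import dataclass

# Hypothetical keywords suggesting that an AI agent sits behind the account.
AI_HINTS = {"llm", "agent", "genai", "copilot", "rag", "mcp", "ai"}


@dataclass
class ServiceAccountRequest:
    """Hypothetical shape of a non-human account request."""
    account_name: str
    requester: str
    target_tools: list[str]
    justification: str = ""


def triage(req: ServiceAccountRequest) -> str:
    """Route every request: none is approved without an AI-context check."""
    text = f"{req.account_name} {req.justification}".lower()
    # Compare whole tokens so that "storage" does not match "rag".
    tokens = set(re.findall(r"[a-z0-9]+", text))
    if tokens & AI_HINTS:
        return "ai-security-review"
    # No obvious hint: still ask the requester explicitly about AI usage.
    return "ask-ai-usage-question"
```

The point of the design is that no path skips the AI question: a request either goes straight to a security review or, at minimum, the requester is asked whether an agent will use the account.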

Since Anthropic published the Model Context Protocol (MCP), the market has seen the emergence of solutions for consuming tools in a rigorous, secure, and controlled manner: proxies, gateways, and dedicated catalogs for the MCP servers used within the company. A priority for companies that want to invest seriously in internal agent development is therefore to set up a specific, easily accessible infrastructure for MCP servers. This centralized approach is a direct response to the LLM07 (Insecure Plugin Design) and LLM08 (Excessive Agency) risks of the 2023 OWASP list, because it ensures that connections are authenticated and agent permissions are strictly controlled.
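To make the idea of a controlled catalog more concrete, here is a minimal sketch of the authorization decision a gateway could take before forwarding a tool call. It does not use any MCP SDK, and all names (`CATALOG`, `AGENT_GRANTS`, `authorize`, the server URLs, and the tool names) are hypothetical placeholders rather than part of the protocol.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class McpServerEntry:
    """Hypothetical catalog entry for an approved MCP server."""
    name: str
    url: str
    allowed_tools: frozenset


# Hypothetical catalog of MCP servers approved by the security team.
CATALOG = {
    "crm": McpServerEntry(
        "crm", "https://mcp.example.internal/crm",
        frozenset({"search_accounts", "read_contact"}),
    ),
    "itsm": McpServerEntry(
        "itsm", "https://mcp.example.internal/itsm",
        frozenset({"create_ticket", "read_ticket"}),
    ),
}

# Hypothetical grants: agent identity -> server -> tools it may call.
AGENT_GRANTS = {
    "svc-sales-agent": {"crm": {"search_accounts"}},
}


def authorize(agent_id: str, server: str, tool: str) -> tuple:
    """Default-deny check: returns (allowed, reason)."""
    entry = CATALOG.get(server)
    if entry is None:
        return False, "server not in catalog"
    if tool not in entry.allowed_tools:
        return False, "tool not published by catalog"
    granted = AGENT_GRANTS.get(agent_id, {}).get(server, set())
    if tool not in granted:
        return False, "tool not granted to agent"
    return True, "ok"
```

With these sample grants, `authorize("svc-sales-agent", "crm", "search_accounts")` is allowed, while the same agent calling `read_contact` is refused even though the tool exists in the catalog. That second check is what limits excessive agency: publishing a tool does not grant it to every agent.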

Perimeter protection and DLP

Of course, you will never prevent an intern from consuming an AI service one day without mentioning it to anyone. The most effective countermeasure is perimeter protection with dedicated CASB/SASE tools. These tools go further than firewalls by analyzing which applications are consumed over Internet traffic. Notable players in this market include Netskope, Zscaler, and of course Microsoft Defender for Cloud Apps. To be as effective as possible, I recommend tools that support TLS (SSL) decryption, since content inspection is impossible on encrypted traffic. These perimeter controls are fundamental to addressing the Sensitive Information Disclosure risk (LLM06 in the 2023 OWASP list, LLM02 in the 2025 edition), by preventing the exfiltration of confidential data to unapproved models.
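The logic these tools apply can be sketched as follows, assuming the traffic has already been decrypted so that the request body is visible. This is not the API of any CASB/SASE product: the host lists, the patterns, and the `inspect_request` function are hypothetical and only illustrate the decision flow.

```python
import re
from urllib.parse import urlparse

# Hypothetical lists: sanctioned internal endpoints and known public GenAI hosts.
APPROVED_AI_HOSTS = {"llm.example.internal"}
KNOWN_AI_HOSTS = {"genai.example.com", "chat.example.net"}

# Hypothetical markers of confidential content.
SENSITIVE_PATTERNS = [
    re.compile(r"\bconfidential\b", re.IGNORECASE),
    re.compile(r"\bEMP-\d{6}\b"),  # assumed internal employee ID format
]


def inspect_request(url: str, body: str) -> str:
    """Classify an outbound request as allow, not-genai, alert, or block."""
    host = (urlparse(url).hostname or "").lower()
    if host in APPROVED_AI_HOSTS:
        return "allow"
    if host not in KNOWN_AI_HOSTS:
        return "not-genai"  # outside this policy; other controls apply
    if any(p.search(body) for p in SENSITIVE_PATTERNS):
        return "block"  # confidential data going to an unapproved model
    return "alert"  # shadow GenAI usage worth a follow-up
```

The "alert" outcome matters as much as "block": it is what surfaces unapproved usage so that the team behind it can be brought back into the collaborative approach.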

Note that several players in the firewall world are currently extending their catalogs with AI application security products, such as Palo Alto Networks with its AI Runtime Security (AIRS) solution. The market is very dynamic right now.

The second layer of prevention would logically sit at the endpoint level. However, I do not recommend focusing too much on this area today. With an EDR and a DLP tool like Microsoft Purview, you should already be partially protected. My feeling, though, is that by design these tools will struggle to maintain a high level of protection in this area, so I would consider them allies (they also help manage the Sensitive Information Disclosure risk, LLM06 in the 2023 OWASP list) rather than leading actors.

Conclusion

These solutions will allow you to secure the environment in which your AI initiatives take place. However, they do not secure the agents themselves against risks such as OWASP LLM01 (Prompt Injection), nor against supply chain risks such as downloading malicious models from the Web. These threats will be addressed in another article focusing on what is called runtime protection for AI applications.