Privileged AI Agents: A double-edged sword

The growing integration of artificial intelligence agents into our information systems promises significant gains in efficiency and automation. However, when these agents are granted elevated privileges (access to sensitive data, the ability to execute critical commands, or the ability to interact with vital infrastructure), they become a prime target and a major point of vulnerability. This article explores the inherent risks of highly privileged AI agents and, drawing on modern security principles and Meta AI’s recommendations, proposes architectural strategies and practical measures to minimize these threats.

Understanding the threat model

The first step in securing AI agents is understanding the threat landscape.

Who are the attackers? Threats can come from inside the organization (disgruntled employees acting maliciously, or well-meaning staff making mistakes) or from outside it (cybercriminals, competitors, nation-states). Motivations vary: espionage, sabotage, financial fraud, or simple disruption.

What are the attack vectors? AI agents can be compromised in various ways:

- Software vulnerabilities: Exploitation of flaws in the agent’s code or its dependencies.

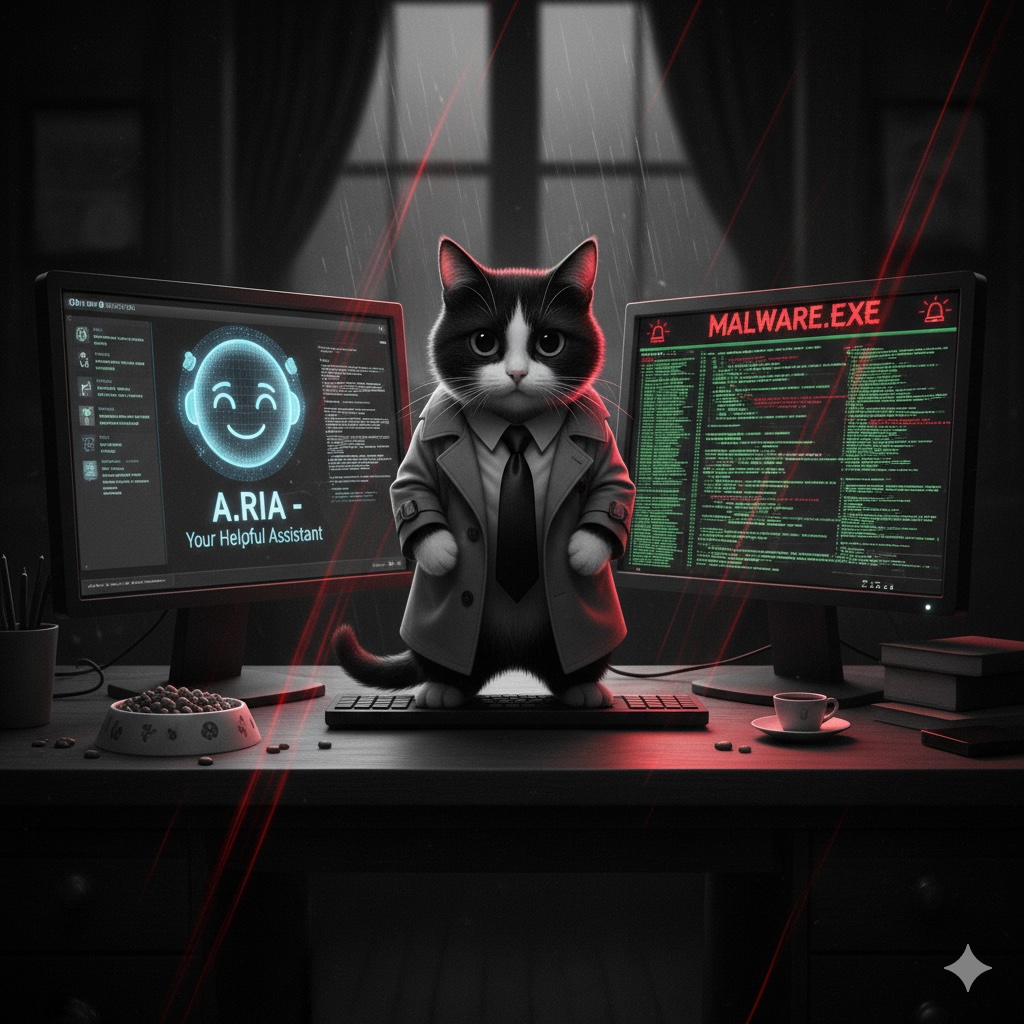

- Malicious prompt injection: Manipulation of the agent via specifically crafted instructions to make it perform unauthorized actions.

- Execution environment compromise: Attacks targeting the underlying infrastructure hosting the agent.

- Theft of API keys or access tokens: Obtaining credentials allowing an attacker to impersonate the agent.

- Denial of Service (DoS) attacks: Overloading the agent or its resources to disrupt its operation.

What are the consequences? A compromise can lead to severe consequences:

- Theft or exfiltration of sensitive data (personal information, trade secrets).

- Manipulation of critical systems (financial, industrial, healthcare).

- Interruption of essential services.

- Financial fraud or unauthorized transactions.

- Damage to the organization’s reputation.

Concrete examples of attacks and impact scenarios

To illustrate the severity of these risks, let’s consider a few scenarios:

- Inventory Management: An AI agent responsible for inventory management, granted write privileges to the database and access to ordering systems, could be manipulated. An attacker could inject a prompt to order excessive quantities of expensive products to a delivery address controlled by the attacker, or conversely, deplete the stock of a key product, severely disrupting the supply chain.

- Customer Support and Sensitive Data: A customer support agent with access to customer records (personally identifiable information, order history) could be exploited. A sophisticated prompt injection could cause it to exfiltrate customer lists and their sensitive data to an external service controlled by the attacker.

- Code Deployment and Infrastructure: A Continuous Integration/Continuous Deployment (CI/CD) agent with administrator privileges on production servers represents a critical target. If it is compromised, an attacker could use it to deploy malware or backdoors, or to modify security configurations, gaining persistent access to the infrastructure.

Meta AI’s article, “Practical AI Agent Security”, highlights the importance of considering agent “capabilities”. Poor management of these capabilities, such as granting excessive privileges, can directly lead to unintended or malicious actions, perfectly echoing these attack scenarios.
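As an illustration of capability management, here is a minimal sketch of a deny-by-default tool registry. It is not taken from the Meta AI article: the names `AgentProfile`, `ToolRegistry`, and the capability strings such as `inventory.read` are hypothetical and chosen only to mirror the inventory scenario above.

```python
from dataclasses import dataclass
from typing import Callable, Dict, FrozenSet


class CapabilityError(PermissionError):
    """Raised when an agent requests a tool outside its granted capabilities."""


@dataclass(frozen=True)
class AgentProfile:
    # Hypothetical structure: an agent is just a name plus an explicit allowlist.
    name: str
    capabilities: FrozenSet[str]


class ToolRegistry:
    """Maps capability names to callables and checks the allowlist on every call."""

    def __init__(self) -> None:
        self._tools: Dict[str, Callable[..., object]] = {}

    def register(self, capability: str, func: Callable[..., object]) -> None:
        self._tools[capability] = func

    def invoke(self, agent: AgentProfile, capability: str, **kwargs: object) -> object:
        # Deny by default: anything not explicitly granted is refused.
        if capability not in agent.capabilities:
            raise CapabilityError(f"{agent.name} is not granted '{capability}'")
        if capability not in self._tools:
            raise KeyError(f"unknown capability '{capability}'")
        return self._tools[capability](**kwargs)


registry = ToolRegistry()
# Stand-in tools; real ones would call the inventory database and ordering system.
registry.register("inventory.read", lambda sku: {"sku": sku, "stock": 12})
registry.register("orders.create", lambda sku, quantity: f"ordered {quantity} x {sku}")

# This agent can read stock levels but cannot place orders.
inventory_reader = AgentProfile("inventory-reader", frozenset({"inventory.read"}))
```

With this setup, `registry.invoke(inventory_reader, "inventory.read", sku="A1")` succeeds, while a request for `orders.create` raises `CapabilityError`, even if a prompt injection convinces the agent to attempt it.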

Architectural strategies and principles to mitigate risks

AI agent security must be integrated by design. Here are the fundamental architectural principles:

- Principle of Least Privilege: This is the cornerstone of any secure architecture. An agent should have only the permissions strictly necessary to perform its task and nothing more. Meta AI emphasizes this point, recommending that teams “limit agent capabilities to what is necessary to accomplish their task,” which reduces the attack surface and limits the damage in the event of a compromise.

- Segmentation and Isolation: Isolate agents and their execution environments. Critical agents should operate in secure enclaves, separated from other systems, to contain damage in the event of a breach.

- External Checks and Human-in-the-Loop: For actions deemed sensitive or high-risk (e.g., financial transactions, critical configuration changes), require validation by an independent external system or human approval. Meta AI’s article underscores the importance of a “human-in-the-loop” for high-risk actions, ensuring essential oversight.

- Backups and Recovery: Implement robust backup and restoration mechanisms to minimize the impact of a compromise and allow a rapid return to a functional state.

- Auditing and Logging: Record all agent actions, resource accesses, and interactions. Regular log audits are essential to detect anomalies, attack attempts, and suspicious behaviors.

- Rate Limiting and Circuit Breakers: Prevent agents from performing an excessive number of actions in a short time (rate limiting) or from continuing to operate in the event of abnormal behavior or repeated failures (circuit breakers). These mechanisms act as security fuses.

- Input and Output Validation: Rigorously filter and validate all data processed by the agent, both incoming (prompts, external data) and outgoing, to prevent injections and information leaks.
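The "security fuse" idea from the rate limiting and circuit breaker principle can be sketched as follows. This is one possible design under stated assumptions, not a reference implementation: the class name `AgentFuse`, its thresholds, and the choice of a sliding window with a consecutive-failure counter are all illustrative.

```python
import time
from collections import deque
from typing import Callable, Deque


class ActionBlocked(RuntimeError):
    """Raised when the fuse refuses to run an action."""


class AgentFuse:
    """Sliding-window rate limiter combined with a consecutive-failure circuit breaker."""

    def __init__(self, max_actions: int, window_seconds: float, max_failures: int,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_actions = max_actions
        self.window_seconds = window_seconds
        self.max_failures = max_failures
        self._clock = clock  # injectable so the behavior can be tested deterministically
        self._timestamps: Deque[float] = deque()
        self._consecutive_failures = 0
        self.tripped = False

    def run(self, action: Callable[[], object]) -> object:
        if self.tripped:
            raise ActionBlocked("circuit open: manual reset required")
        now = self._clock()
        # Drop timestamps that have left the sliding window.
        while self._timestamps and now - self._timestamps[0] >= self.window_seconds:
            self._timestamps.popleft()
        if len(self._timestamps) >= self.max_actions:
            raise ActionBlocked("rate limit exceeded")
        self._timestamps.append(now)
        try:
            result = action()
        except Exception:
            self._consecutive_failures += 1
            if self._consecutive_failures >= self.max_failures:
                self.tripped = True  # stop the agent until an operator intervenes
            raise
        self._consecutive_failures = 0
        return result

    def reset(self) -> None:
        """Called by an operator after investigating why the breaker tripped."""
        self._consecutive_failures = 0
        self.tripped = False
```

In this sketch the breaker stays open until `reset()` is called, on the assumption that a human should look at repeated failures before a privileged agent resumes. A time-based half-open state is a common alternative when automatic recovery is acceptable.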

Practical recommendations for implementation

To turn these principles into concrete actions, here are 7 practical recommendations:

- Define Granular Privilege Policies: Use robust Identity and Access Management (IAM) systems (e.g., AWS IAM, Microsoft Entra ID (formerly Azure AD), Google Cloud IAM) to assign specific roles and permissions to each agent, strictly following the principle of least privilege. Each resource (database, API, service) must have well-defined access policies.

- Implement Security Gateways: Interpose validation services or API Gateways between the agent and critical systems. These gateways can filter requests, enforce security policies, perform additional authorization checks, and validate data formats before they reach underlying systems.

- Integrate Human Approval Points: For any action that modifies sensitive data, has a financial impact, or has irreversible consequences, configure workflows that require manual human approval before execution. Workflow orchestration tools can facilitate this integration.

- Use Containerized and Immutable Environments: Deploy agents in containers (e.g., Docker images orchestrated with Kubernetes) with immutable configurations. This reduces the attack surface, ensures environment consistency, and facilitates quick rollbacks in case of problems.

- Implement Active Monitoring and Alerts: Use SIEM (Security Information and Event Management) tools or monitoring platforms (Splunk, ELK Stack, Datadog) to aggregate agent logs, detect suspicious behaviors (unauthorized access attempts, abnormal operation volumes), and trigger real-time alerts.

- Conduct Regular Security Audits and Penetration Tests: Do not wait for an attack to test resilience. Simulate attacks (pentests) and conduct regular security audits on the agent’s code and infrastructure to proactively identify vulnerabilities and validate the effectiveness of security controls.

- Implement Rapid “Rollback” Mechanisms: Ensure the ability to quickly revert to a stable previous state in the event of a compromise or major agent malfunction. This includes regular backups of configurations and data, as well as deployment procedures that allow for easy rollbacks.
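To make the gateway and human approval recommendations more concrete, here is a minimal sketch of a gateway that sits between an agent and its target systems, requires approval for high-risk actions, and records every decision. The action names, the `ApprovalGateway` class, and the `deny_all` approver are hypothetical; a real approver would typically open a ticket or a chat prompt and wait for a person to respond.

```python
from dataclasses import asdict, dataclass
from typing import Any, Callable, Dict, List

# Hypothetical action names; a real deployment would define its own risk tiers.
HIGH_RISK_ACTIONS = frozenset({"payments.transfer", "config.update"})


@dataclass(frozen=True)
class ActionRequest:
    agent: str
    action: str
    params: Dict[str, Any]


class ApprovalGateway:
    """Sits between an agent and critical systems and gates high-risk actions."""

    def __init__(self, approver: Callable[[ActionRequest], bool],
                 executor: Callable[[ActionRequest], object]) -> None:
        self._approver = approver
        self._executor = executor
        self.audit_log: List[Dict[str, Any]] = []

    def submit(self, request: ActionRequest) -> object:
        needs_approval = request.action in HIGH_RISK_ACTIONS
        approved = self._approver(request) if needs_approval else True
        # Record every decision, including denials, before anything executes.
        self.audit_log.append(
            {**asdict(request), "needs_approval": needs_approval, "approved": approved}
        )
        if not approved:
            raise PermissionError(f"'{request.action}' was not approved")
        return self._executor(request)


def deny_all(request: ActionRequest) -> bool:
    # Stand-in for a human reviewer who rejects the request.
    return False


def fake_executor(request: ActionRequest) -> str:
    # Stand-in for the call to the real downstream system.
    return f"executed {request.action}"


gateway = ApprovalGateway(approver=deny_all, executor=fake_executor)
```

Here a low-risk request such as `tickets.read` passes straight through, while `payments.transfer` raises `PermissionError` because the stand-in reviewer declines it. Both outcomes end up in `gateway.audit_log`, which is the kind of record a SIEM could ingest.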

Conclusion

Highly privileged AI agents are powerful tools capable of transforming our operations. However, their potential comes with significant risks that cannot be ignored. As the Meta AI article points out, a thoughtful and proactive security architecture is essential.

By adopting a rigorous approach that applies least privilege and segmentation and that integrates human checks and robust monitoring, organizations can harness the power of AI while keeping the threats under control. AI agent security is not an option but a necessity for building resilient, reliable, and trustworthy automation systems. It is time to invest in a secure architecture to protect our most valuable digital assets and give intelligent automation a sound footing.